Discourse in the global blockchain community has been characterized by idealism going back to Satoshi Nakamoto’s early writings on Bitcoin as a response to central banking. The line of reasoning is that systems that are vulnerable to corruption, or in any case cater to the wishes of a select few, could be made more accountable if they were governed by code. If that code lives on the blockchain, then it is impervious to biased intervention by minority parties.

Following that tradition, in a September 2013 blog post for Bitcoin Magazine, Vitalik Buterin explored the notion of the DAO. The article began as follows:

Corporations, US presidential candidate Mitt Romney reminds us, are people. Whether or not you agree with the conclusions that his partisans draw from that claim, the statement certainly carries a large amount of truth. What is a corporation, after all, but a certain group of people working together under a set of specific rules? When a corporation owns property, what that really means is that there is a legal contract stating that the property can only be used for certain purposes under the control of those people who are currently its board of directors, a designation itself modifiable by a particular set of shareholders. If a corporation does something, it’s because its board of directors has agreed that it should be done. If a corporation hires employees, it means that the employees are agreeing to provide services to the corporation’s customers under a particular set of rules, particularly involving payment. When a corporation has limited liability, it means that specific people have been granted extra privileges to act with reduced fear of legal prosecution by the government: a group of people with more rights than ordinary people acting alone, but ultimately people nonetheless. In any case, it’s nothing more than people and contracts all the way down.

However, here a very interesting question arises: do we really need the people?2

1See https://blog.ethereum.org/2014/05/06/daos-dacs-das-and-more-an-incomplete-terminology-guide/

© Vikram Dhillon, David Metcalf, and Max Hooper 2017

V. Dhillon et al., Blockchain Enabled Applications, https://doi.org/10.1007/978-1-4842-3081-7_6

Three years after Buterin’s article was first published, The DAO came into existence as a smart contract written in Solidity, perhaps the purest manifestation of this idealism. Despite its canonical label, The DAO was neither the first nor the last decentralized autonomous organization. In fact, by May 2016 when the leadership at Slock.it kicked off The DAO’s record-breaking initial coin offering (ICO), DAOs were well established as the third wave of the increasingly mainstream blockchain phenomenon.3

Many people consider Bitcoin to be the very first DAO, but the two differ drastically. Bitcoin is governed by code shared by every miner in the network, yet it has no internal balance sheet, only functions by which its users can exchange value. Other DAOs at the time did have a concept of asset ownership; what made The DAO unique was that central to its code were the radically democratic processes that defined how The DAO would deploy its resources. It was a realization of Buterin’s concept of a corporation that could conduct business without having a single employee, let alone a CEO.

From the DAO white paper:

This paper illustrates a method that for the first time allows the creation of organizations in which (1) participants maintain direct real-time control of contributed funds and (2) governance rules are formalized, automated and enforced using software. Specifically, standard smart contract code has been written that can be used to form a Decentralized Autonomous Organization (DAO) on the Ethereum blockchain.

Buterin talked about the balance between automation and capital in the context of what sets decentralized organizations apart from traditional companies. Paul Kohlhaas from ConsenSys presented Figure 6-1 to illustrate where DAOs fall on the spectrum of autonomous organizations.

2See https://bitcoinmagazine.com/articles/bootstrapping-a-decentralized-autonomous-corporation-part-i-1379644274/

3To put this in perspective, 15 days into The DAO’s crowdsale, members of the MakerDAO subreddit were discussing proposals that would trigger an investment in MakerDAO by The DAO.


[Figure 6-1 image: DAO Quadrant. A grid that classifies entities by whether they hold internal capital or no internal capital, and by whether automation or humans sit at the center and at the edges. Labels in the grid: DAOs, AI (holy grail), Daemons, DAs, DOs, DCs, Robots (e.g. assembly line), Boring old organizations, Web services, Forums, Tools.]

Figure 6-1. DAOs as automation-powered decision-making entities with human participants

In essence, DAOs are a paradigm shift from automated entities that previously contained no capital. Using a blockchain allows us to infuse capital and build hybrid business models where we can fine-tune the degree of automation for specific use cases.

The Team

As is often the case in the blockchain world, much confusion surrounds the nature of the relationship between the employeeless DAO and the humans who wrote and maintained The DAO’s code. Those humans, in the case of The DAO, were led by the top brass at Slock.it, a German company set on disrupting the sharing economy by way of a technology they called the Universal Sharing Network (USN).

Christopher Jentzsch, Slock.it’s CEO, and Stephan Tual, the company’s COO, held senior positions at the Ethereum Foundation (Lead Tester and CCO, respectively) prior to starting Slock.it. Jentzsch was the primary developer of The DAO code, and Tual became the face of The DAO via blog posts, conference presentations, and forum contributions. So how would their current company benefit from the creation of a leaderless venture fund built on Ethereum? To understand their motivations we have to examine Slock.it’s vision to connect the blockchain to the physical world.

In building the USN, Slock.it set out to play a central role in mainstream adoption of IoT technology.

By providing a way to interact with devices on the network from anywhere in the world, the USN would, hopefully, become the backbone of a hyperconnected world, where your property can be rented out to other people without the need for centralized companies like Uber and Airbnb. Instead, the USN would provide an interface to the Ethereum blockchain, where decentralized applications can govern the transactions that make up the sharing economy.

The company intended to build a specialized modem, called the Ethereum Computer, for connecting IoT devices to the USN. Slock.it’s vision for The DAO was to create a decentralized venture fund that would invest in promising proposals to build blockchain-supported products and services.


At the time of writing (18 months after The DAO’s ICO), Slock.it has raised $2 million in seed funding to continue developing the USN and the Ethereum Computer. According to Tual’s blog posts on the company web site, Slock.it will now make the Ethereum Computer available as a free and open source image for popular system-on-a-chip (SoC) systems such as Raspberry Pi. The company also built and supports Share&Charge, a service that lets owners of electric vehicle charging stations sell their power to electric vehicle owners via a blockchain-powered mobile app.

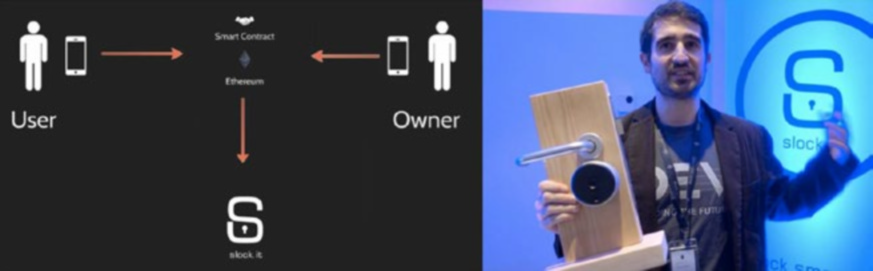

Jeremiah Owyang from Crowd Companies summarized one of the main use cases of Slock.it in a slide shown in Figure 6-2.

[Figure 6-2 slide text: Slock.it smart locks link to secure Ethereum contracts on the blockchain]

Figure 6-2. Slock.it can act as a decentralized Airbnb by linking the purchase of a physical device (a smart lock) to a smart contract

Ultimately, this idea was expanded to become a decentralized IoT platform, where any device could be connected to the blockchain.

The DAO

The original conception of The DAO was not the radical experiment in democratic business processes that was eventually released at its ICO. Jentzsch described the process on the Slock.it blog:

In the beginning, we created a slock.it specific smart contract and gave token holders voting power about what we, slock.it, should do with the funds received.

After further consideration, we gave token holders even more power, by giving them full control over the funds, which would be released only after a successful vote on detailed proposals backed by smart contracts. This was already a few steps beyond the Kickstarter model, but we would have been the only recipient of funds in this narrow slock.it-specific DAO.


We wanted to go even further and create a “true” DAO, one that would be the only and direct recipient of the funds, and would represent the creation of an organization similar to a company, with potentially thousands of Founders.4

To achieve this decoupling of Slock.it and The DAO, Jentzsch designed a Solidity contract that would allow any DAO token holder to make proposals for how The DAO’s resources should be handled. All token holders could vote on active proposals, which had a minimum voting period of 14 days.

That meant that once The DAO’s ICO was complete, Slock.it would have to submit a proposal to The DAO just like anyone else. Other users could use the Mist browser to evaluate the proposal. This is how proposals were structured:

    struct Proposal {
        address recipient;
        uint amount;
        string description;
        uint votingDeadline;
        bool open;
        bool proposalPassed;
        bytes32 proposalHash;
        uint proposalDeposit;
        bool newCurator;
        SplitData[] splitData;
        uint yea;
        uint nay;
        mapping (address => bool) votedYes;
        mapping (address => bool) votedNo;
        address creator;
    }

As you can see, proposals (the core of this automated code base that would quickly raise $150 million) were relatively simple requests for The DAO’s resources (uint amount).

Any DAO token holder could vote on proposals by calling the vote function:

    function vote(
        uint _proposalID,
        bool _supportsProposal
    ) onlyTokenholders returns (uint _voteID);

The votes from any one address would be weighted proportional to the amount of DAO tokens held at that address. If token holders wanted to vote for two separate positions, they could transfer the amount of tokens they wanted to vote with to another address, and vote again from there.5 Any tokens voting on an open proposal were locked (could not be transferred) until the end of the voting period.

The uint proposalDeposit was the deposit (in wei) that creators of a proposal had to stake on the proposal until the voting period closed. If the proposal never reached quorum, the deposit would remain with The DAO.
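To make the weighting rule concrete, here is a minimal sketch in Python (not Solidity) of how token-weighted votes might be tallied. It is an illustration under stated assumptions, not a transcription of DAO.sol: the names Proposal, vote, and passed only loosely mirror the contract, the 20 percent quorum comes from the footnote and is treated here as a share of the total token supply, and the one-vote-per-address and simple-majority rules are simplifications. Token locking and deposits are not modeled.

```python
from dataclasses import dataclass, field

QUORUM_PERCENT = 20  # assumption: the quorum figure from the footnote


@dataclass
class Proposal:
    """Toy stand-in for the voting fields of the Solidity Proposal struct."""
    yea: int = 0
    nay: int = 0
    voted: set = field(default_factory=set)


def vote(proposal, balances, voter, supports):
    """Record one vote, weighted by the voter's token balance."""
    weight = balances.get(voter, 0)
    if weight == 0:
        raise PermissionError("only token holders may vote")
    if voter in proposal.voted:
        raise ValueError("address has already voted")
    if supports:
        proposal.yea += weight
    else:
        proposal.nay += weight
    proposal.voted.add(voter)


def passed(proposal, total_supply):
    """True if enough tokens voted and yea outweighs nay (integer math only)."""
    quorum_met = (proposal.yea + proposal.nay) * 100 >= QUORUM_PERCENT * total_supply
    return quorum_met and proposal.yea > proposal.nay


# Hypothetical holders and balances, for illustration only.
balances = {"alice": 600, "bob": 300, "carol": 100}
p = Proposal()
vote(p, balances, "alice", True)
vote(p, balances, "bob", False)
print(p.yea, p.nay, passed(p, sum(balances.values())))
```

Because weight follows the balance at the voting address, a holder who wanted to back both positions would first have to move part of the balance to a second address, which is the behavior the text describes.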

4https://blog.slock.it/the-history-of-the-dao-and-lessons-learned-d06740f8cfa5

5This could be the case because proposals required a quorum of 20 percent of votes to have weighed in on a proposal for the vote to be valid.


Two special types of proposals, neither of which required a deposit, would play a key role in the fate of The DAO. The first type was a proposal to split The DAO, effectively withdrawing the funds of the recipient of the proposal into a new “child” DAO, which was a clone of the original, but at a new contract address. Split proposals had a voting period of 7 days, instead of 14, and anyone who voted yes on a split proposal would follow the recipient, withdrawing their tokens from the original DAO and moving them into the resultant child DAO.

The second special type of proposal was to replace the curator of The DAO. DAO curators were addresses, set at the creation of The DAO and of any child DAO, that could whitelist recipient addresses, effectively serving as gatekeepers.6 If the majority voted no on a proposal to replace a curator, the yes voters could elect to stand by their decision, creating a new DAO with their chosen curator.

The ICO Highlights

The ICO for the initial DAO concept was an overnight success:

• It raised 12 million ETH (~$150 million).

• Both Jentzsch and Tual admitted that they never expected their idea to be so successful.

The Hack

The idea that The DAO was vulnerable to attack had been floating around in the developer community. Vlad Zamfir and Emin Gün Sirer first raised the issue in a blog post calling for a moratorium on The DAO until the vulnerabilities could be addressed.7 Just days before the attack, MakerDAO cautioned the community that their code was vulnerable to an attack, and Peter Vessenes demonstrated that this vulnerability was shared by The DAO.8

These warnings prompted a now infamous blog post published on June 12, 2016 by Tual on the Slock.it web site titled “No DAO funds at risk following the Ethereum smart contract ‘recursive call’ bug discovery.”

Within a couple of days, fixes had been proposed to correct many of the known vulnerabilities of The DAO, but it was already too late. On June 17, an attacker began draining funds from The DAO.

The DAO attacker exploited a well-intentioned although poorly implemented feature of The DAO that was intended to prevent tyranny of the majority over dissenting DAO token holders. From The DAO white paper:

A problem every DAO has to mitigate is the ability for the majority to rob the minority by changing governance and ownership rules after DAO formation. For example, an attacker with 51% of the tokens, acquired either during the fueling period or created afterwards, could make a proposal to send all the funds to themselves. Since they would hold the majority of the tokens, they would always be able to pass their proposals.

To prevent this, the minority must always have the ability to retrieve their portion of the funds. Our solution is to allow a DAO to split into two. If an individual, or a group of token holders, disagrees with a proposal and wants to retrieve their portion of the Ether before the proposal is executed, they can submit and approve a special type of proposal to form a new DAO. The token holders who voted for this proposal can then split the DAO, moving their portion of the Ether to this new DAO, leaving the rest alone only able to spend their own Ether.

6Curators weren’t necessarily human gatekeepers. Gavin Wood “resigned” as a curator of The DAO to make a point that curation was merely a technical role and that the curator had no proactive control over The DAO.

7http://hackingdistributed.com/2016/05/27/dao-call-for-moratorium/

8http://vessenes.com/more-ethereum-attacks-race-to-empty-is-the-real-deal/


Unfortunately, the way that this “split” feature was implemented made The DAO vulnerable due to a catastrophic reentrancy bug.9 In other words, someone could recursively split from The DAO, repeatedly withdrawing an amount equal to their original ETH investment before the original DAO contract ever recorded the withdrawal.

Here is the vulnerability, as found in the Solidity contract file DAO.sol:

    function splitDAO(
        uint _proposalID,
        address _newCurator
    ) noEther onlyTokenholders returns (bool _success) {
        ...
        // [Added for explanation] The first step moves Ether and assigns new tokens
        uint fundsToBeMoved =
            (balances[msg.sender] * p.splitData[0].splitBalance) /
            p.splitData[0].totalSupply;
        // [Added for explanation] This is the line that splits the DAO before
        // updating the funds in the account calling for the split
        if (p.splitData[0].newDAO.createTokenProxy.value(fundsToBeMoved)(msg.sender) == false)
        ...
        // Burn DAO Tokens
        Transfer(msg.sender, 0, balances[msg.sender]);
        withdrawRewardFor(msg.sender); // be nice, and get his rewards
        // [Added for explanation] The previous line is key in that it is called before
        // totalSupply and balances[msg.sender] are updated to reflect the new balances
        // after the split has been performed
        totalSupply -= balances[msg.sender]; // [Added for explanation] This happens after the split
        balances[msg.sender] = 0; // [Added for explanation] This also happens after the split
        paidOut[msg.sender] = 0;
        return true;
    }

As shown here, The DAO referenced the balances mapping to determine how many DAO tokens were available to be moved. The value of p.splitData[0] is a property of the proposal being submitted to The DAO, not a property of The DAO itself. That, in combination with the fact that withdrawRewardFor is called before balances[] is updated, made it possible for the attacker to reenter splitDAO and have fundsToBeMoved recomputed indefinitely, because their balance would still return its original value.
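The arithmetic behind fundsToBeMoved can be checked with a few lines of Python. This is only a worked example under assumed numbers: the balances, split_balance, and total_supply values are hypothetical, and the helper funds_to_be_moved is an illustrative name that mirrors the expression in splitDAO using integer division, as Solidity's uint division truncates.

```python
def funds_to_be_moved(token_balance, split_balance, total_supply):
    """Mirror (balances[msg.sender] * splitBalance) / totalSupply.

    Integer division is used because Solidity's uint division truncates.
    """
    return (token_balance * split_balance) // total_supply


# Hypothetical values, chosen only to keep the arithmetic readable.
balances = {"attacker": 100}   # DAO tokens held by the caller
split_balance = 1_000_000      # Ether (in wei) recorded for the split proposal
total_supply = 10_000          # token supply recorded for the split proposal

first_call = funds_to_be_moved(balances["attacker"], split_balance, total_supply)

# A reentrant call arrives before balances["attacker"] is zeroed,
# so the same inputs produce the same payout again.
second_call = funds_to_be_moved(balances["attacker"], split_balance, total_supply)

balances["attacker"] = 0       # the update that splitDAO performs last
after_update = funds_to_be_moved(balances["attacker"], split_balance, total_supply)

print(first_call, second_call, after_update)
```

The first two results are identical because nothing the formula reads has changed between the calls; only after the balance is zeroed does the computed amount fall to zero.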

9Reentrancy is a characteristic of software in which a routine can be interrupted in the middle of its execution, and then be initiated (reentered) from its beginning, while the remaining portion of the original instance of the routine remains queued for execution.


Looking more closely at withdrawRewardFor() shows us the conditions that made this possible:

    function withdrawRewardFor(address _account) noEther internal returns (bool success) {
        if ((balanceOf(_account) * rewardAccount.accumulatedInput()) / totalSupply < paidOut[_account])
            throw;
        uint reward =
            (balanceOf(_account) * rewardAccount.accumulatedInput()) / totalSupply - paidOut[_account];
        // [Added for explanation] this is the statement that is vulnerable
        // to the recursion attack. We must go deeper.
        if (!rewardAccount.payOut(_account, reward))
            throw;
        paidOut[_account] += reward;
        return true;
    }

Assuming the first statement evaluates as false, the statement marked as vulnerable will run. There’s one more step to examine to understand how the attacker was able to make that the case. The first time withdrawRewardFor is called (when the attacker had legitimate funds to withdraw), the first statement would correctly evaluate as false, causing the following code to run:

    function payOut(address _recipient, uint _amount) returns (bool) {
        if (msg.sender != owner || msg.value > 0 || (payOwnerOnly && _recipient != owner))
            throw;
        if (_recipient.call.value(_amount)()) { // [Added for explanation] this is the coup de grace
            PayOut(_recipient, _amount);
            return true;
        } else {
            return false;
        }
    }

The second if statement in payOut() sends Ether to _recipient, the address of the person proposing the split. That address is a contract containing a function that runs when the Ether arrives and calls splitDAO again from within withdrawRewardFor(), before the token balance at that address is updated. That created a call stack that looked like this:

    splitDAO
      withdrawRewardFor
        payOut
          recipient.call.value()()
            splitDAO
              withdrawRewardFor
                payOut
                  recipient.call.value()()

The attacker was therefore able to withdraw funds from The DAO into a child DAO indefinitely.
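The call stack above can be reproduced with a small Python model. This is a deliberately simplified sketch, not the contract's logic: ToyDAO, Attacker, and run_attack are hypothetical names, one token is assumed to be backed by one unit of Ether, the child DAO and reward account are collapsed into a single external call, and max_depth stands in for the gas and stack limits that bound real recursion. The patched flag shows one common remedy, recording the balance change before handing control to the recipient.

```python
class ToyDAO:
    """Toy model of the split path: 1 token is backed by 1 unit of Ether."""

    def __init__(self, balances, patched=False):
        self.balances = dict(balances)
        self.ether = sum(balances.values())
        self.patched = patched

    def split(self, holder):
        amount = self.balances.get(holder.name, 0)
        if amount == 0 or amount > self.ether:
            return  # nothing to withdraw, or the pool cannot cover it
        if self.patched:
            self.balances[holder.name] = 0   # effect recorded before the call
        self.ether -= amount
        holder.receive(self, amount)         # external call: control leaves the DAO
        if not self.patched:
            self.balances[holder.name] = 0   # vulnerable order: recorded too late


class Attacker:
    """Recipient whose receive hook reenters split() up to max_depth times."""

    def __init__(self, name, max_depth):
        self.name = name
        self.max_depth = max_depth
        self.depth = 0
        self.received = 0

    def receive(self, dao, amount):
        self.received += amount
        if self.depth < self.max_depth:
            self.depth += 1
            dao.split(self)                  # reenter before the balance is zeroed


def run_attack(patched, max_depth=4):
    """Return (Ether received by the attacker, Ether left in the DAO)."""
    dao = ToyDAO({"attacker": 10, "others": 90}, patched=patched)
    attacker = Attacker("attacker", max_depth)
    dao.split(attacker)
    return attacker.received, dao.ether


print(run_attack(patched=False))  # vulnerable ordering
print(run_attack(patched=True))   # balance zeroed before the external call
```

With these assumed numbers the vulnerable ordering pays out 50 units against a 10-token holding, whereas the patched ordering pays out 10 and stops, because the reentrant call finds a zero balance.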


To recap, the attacker accomplished the following:

1. Split The DAO.

2. Withdrew their funds into the new DAO.

3. Recursively called the splitDAO function before the code checked to determine if the funds were available.

This process is visually represented in Figure 6-3.

Figure 6-3. The process of iterative withdrawal

In Figure 6-3, the original DAO is represented by A, and a sub-DAO is created in B. Then, a transfer function requests that some funds be withdrawn from the original DAO in C. Finally, the funds are transferred to the newly created DAO. This process repeats as new DAOs are created with each loop.

The Debate

The result was that the attacker was able to steal about 3.6 million ETH, worth about $50 million at the time of the attack. The DAO investors were left in an especially precarious position. Not only had The DAO been compromised, but if they tried to withdraw the funds into their own child DAO, the resulting contract would have the same vulnerabilities as the original.


However, The DAO investors weren’t the only ones with an interest in the outcome of this turn of events. The hype surrounding The DAO had reached the religious proportions predicted by Buterin back in 2014. About 14 percent of the ETH in circulation at the time was invested in The DAO. That had a number of implications for the entire Ethereum ecosystem, and led to one of the most contentious debates in the short history of blockchains.

On one side of the debate were those looking to protect the fledgling Ethereum ecosystem from a malicious actor in possession of a nontrivial portion of the total ETH in circulation. They were not necessarily concerned with whether or not The DAO would survive, but ultimately wanted to ensure that Ethereum would survive as a reputable blockchain platform on which other DAOs could be built in the future. This was the disposition of Buterin and many of the core members of the Ethereum development team. We’ll call them the justice camp.

On the other side were those committed to the ideals of decentralization and immutability. In the eyes of many in this camp (we’ll call them the immutability camp), the blockchain is an inherently just system in that it is deterministic, and anyone choosing to use it is implicitly agreeing to that fact. In this sense, the DAO attacker had not broken any laws. To the contrary, the reentrancy attack used the software code that made up The DAO’s bylaws and turned it against itself.

The immutability camp believed that rewriting the blockchain to roll back the attacker’s sequestration of ETH in child DAOs would compromise the integrity of the blockchain. The blockchain, according to this line of thought, was supposed to be immutable and without any central authority, including the Ethereum Foundation. They were concerned that the moral hazard of a small group of people rewriting the blockchain could open the door to other interventions, such as selective censorship.

The two sides debated their positions passionately over social media and in news outlets. The process made famous the concepts of soft forks and hard forks. Forking blockchains—or any software code for that matter—was not new to Ethereum or The DAO, but it became the focus of the debate between the justice camp and the immutability camp.

Meanwhile, a group of white hat hackers were working around the clock to try to hack the hacker. The white hat group consisted of people both for and against the hard fork, but they worked together, nonetheless, to perform some of the same attacks that had been identified before June 17 to move the stolen ETH into new contracts in hopes of returning it to its rightful owners.10

The white hat team reached out to people who had made significant investments in The DAO to raise money for stalking attacks, in which they could follow the attacker into new DAOs with greater funds than the attacker was able to withdraw, giving them majority voting rights in the resulting DAO.

The Split: ETH and ETC

On July 20, over 90 percent of the hashrate signaled its support for the fork. The DAO funds were returned to investors as though the organization had never existed. Sort of.

Opposition to the hard fork led to the emergence of Ethereum Classic (ETC), as a small portion of the community continued to mine the original Ethereum blockchain. These immutability fundamentalists were committed to the idea that blockchains represented a new, disruptive governance model.

The most visible member of the movement was Arvicco, a Russian developer using a pseudonym. In a July 2016 interview with Bitcoin Magazine, he characterized the disagreement in this way:

By bailing out the DAO, the Ethereum Foundation is attempting to reach a shortsighted goal of “making investors whole” and “boosting confidence in Ethereum platform.” But they’re doing quite the opposite. Bailing out the DAO undermines two of the three key long-term value propositions of the Ethereum platform.11

10https://www.reddit.com/r/ethereum/comments/4p7mhc/update_on_the_white_hat_attack/

11https://bitcoinmagazine.com/articles/rejecting-today-s-hard-fork-the-ethereum-classic-project-continues-on-the-original-chain-here-s-why-1469038808/


Despite the tenacity of this vocal minority in the Ethereum community, many people did not expect both versions of the blockchain to survive long term. Major exchanges and cryptoservices added support for ETC but many were skeptical of the long-term prospects of a platform that essentially duplicated Ethereum’s capabilities.

Erik Voorhees, founder and CEO of ShapeShift.io, expressed skepticism about ETC’s ability to remain relevant, but explained that, ultimately, he believed that the split was good for the blockchain ecosystem. In November 2016 he told Decentralize Today:

While this caused quite a bit of turmoil (still ongoing), it’s hard for me to say it was a failure. A division within the community has now been resolved, and since both camps were significant enough in size, we now have two Ethereums, for a while at least. It has actually made the community more peaceful, because instead of the two camps arguing over who is right, both of them can be “right” in their own way, and the market will decide whose product is actually better. I expect ETH to win out over ETC, but I have to admit ETC has survived longer than I thought.

At the time of this writing, ETC continues to grow as a platform and as a community. Despite ETC appreciating less rapidly than ETH, BTCC and Huobi recently announced that they would be adding the token to their exchanges. ETC developers have also accelerated their departure from Ethereum as a platform, with the release of Mantis, the first client built from scratch for ETC (as opposed to Ethereum’s Mist, Parity, and other clients).

The Future

When a technology fails after being hyped in a massive spotlight, it is incredibly difficult to recover the credibility of the ideas powering that technology. What does the future of DAOs look like? Any user investing in a DAO token should be cautious, but there have been massive security advances in the structure and governance of DAOs. Interestingly, Paul Kohlhaas also presented a new outlook on DAOs as the next generation of automated VCs, called Decentralized Fund Managers. According to Kohlhaas, DAOs represent a new class of financial asset management tools in which software can manage a fund that would normally be entrusted to traditional venture capitalists. Because software-based management sits at its core, any profits made by a DAO are distributed directly to the token holders. The members of this new DAO are essentially investors, and they would be issued a new class of tokens that represent their holdings (or stake) and earnings. Ultimately, in a DAO, the members can guide how the funds are allocated and what benefits are offered in return for investment. It would stand to reason that a DAO managing funds would operate in traditional VC cycles:

• The first cycle involves investing using the ETH funds.

• The second cycle involves transitioning the DAO into a next-generation automated VC and managing it as one. The governance model can provide new decision-making abilities for early investors such as angel syndicates.
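The idea of profits flowing directly to token holders can be illustrated with a short Python sketch. The text does not specify a distribution rule, so the pro rata split below is an assumption, as are the function name distribute and the sample holdings; any amount lost to integer rounding is returned as a remainder rather than silently dropped.

```python
def distribute(profit, holdings):
    """Split profit across holders in proportion to their token holdings.

    Assumes a pro rata rule with integer (truncating) division; returns
    the per-holder payouts and whatever remainder rounding leaves behind.
    """
    total = sum(holdings.values())
    payouts = {holder: profit * tokens // total for holder, tokens in holdings.items()}
    remainder = profit - sum(payouts.values())
    return payouts, remainder


# Hypothetical holders, for illustration only.
holdings = {"angel": 50, "fund": 30, "member": 20}
payouts, remainder = distribute(1000, holdings)
print(payouts, remainder)
```

A real fund contract would also have to decide who keeps the remainder and when payouts can be claimed, which is where the governance model described above would come in.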

We discuss the idea of artificial intelligence (AI) leading financial investments in the final chapter of the book.


Summary

The future of Ethereum is bright despite the DAO hack. With the emergence of Ethereum Classic and the incredible rate of new developments, the platform is pushing closer to maturity. It must be noted that Ethereum as a platform was not the cause of the vulnerability. In its nascent state, smart-contract code is bound to contain bugs such as the one exploited in this hack, and such failures will lead to better code-checking mechanisms and secure code-writing practices that can avoid these pitfalls. In the future, as a result of forks, we might end up with a consolidated single-currency platform just like before.


Ethereum Tokens: High-Performance Computing

In the Ethereum ecosystem, transfer of value between users is often realized by the use of tokens that represent digital assets. Ether is the default token and the de facto currency used for transactions and initializing smart contracts on the network. Ethereum also supports the creation of new kinds of tokens that can represent any commonly traded commodities as digital assets. All tokens are implemented using the standard protocol, so the tokens are compatible with any Ethereum wallet on the network. The tokens are distributed to users interested in the given specific use case through an ICO. In this chapter, we focus our attention on tokens created for a very specific use case: high-performance computing (HPC). More precisely, we discuss a model of distributed HPC where miners offer computational resources for a task and get rewarded in some form of Ethereum tokens.

We begin our discussion with an overview and life cycle of a token in the network. Then, we dive into the first token, Ethereum Computational Market (ECM), which is the most generalized and comprehensive distributed processing system. ECM will be our standard model for HPC using tokens, and we will introduce concepts such as dispute resolution (firm and soft) and verifiability of off-chain processing with on-chain rewards. The second token we cover is Golem, which positions itself as the Airbnb for unused CPU cycles. The first release, called Brass Golem, will allow distributed rendering of 3D objects using Blender. Future releases will enable more advanced functionality such as processing big-data analytics. The third token we present is Supercomputing Organized by Network Mining (SONM), which extends the idea of fog computing to create an Ethereum-powered machine economy and marketplace. SONM has published a more technically oriented roadmap focused on neural networks and artificial intelligence. The initial use case would be to provide decentralized hosting to run a Quake server. The last token we talk about in this chapter is iEx.ec, which leverages well-developed desktop grid computing software along with a proof-of-computation protocol that will allow for off-chain consensus and monetization of resources offered.

Tokens and Value Creation

To understand the concept of tokens, we first need to understand the context in which they operate, “fat” protocols. At Consensus 2017, Naval Ravikant talked about the idea of “fat” protocols and the fundamental differences between Internet companies and the next generation of startups building on the blockchain.

Currently, the Internet stack is composed of two slices, a “thin” slice of protocols that power the World Wide Web and a “fat” slice of applications that are built on top of the protocols. Some of the largest Internet companies such as Google and Facebook captured value in the application layer, but then had to invent new protocols and an infrastructure layer to actually scale. When Internet companies reached that size, they had validated their core business model and had enough resources to allocate toward creation of new protocols.


V. Dhillon et al., Blockchain Enabled Applications, https://doi.org/10.1007/978-1-4842-3081-7_7


Blockchain-based companies operate on a different stack with a “thin” slice of application layer and a “fat” slice of protocol layer. The value concentrates in the protocol layer, and only a fraction spills over to the application layer. Joel Monégro and Balaji S. Srinivasan proposed two probable reasons for the large interest and investment in the protocol layer:

• Shared data layer: In a blockchain stack, the nature of the underlying architecture is such that key data are publicly accessible through a blockchain explorer. Furthermore, every member of the network has a complete copy of the blockchain to reach consensus on the network. A pertinent example of this shared data layer in practical usage is the ease with which a user can switch between exchanges, such as from Poloniex to Kraken or vice versa. The exchanges all have equal and free access to the underlying data, that is, the blockchain transactions.

• Access tokens: Tokens can be thought of as analogous to paid API keys that provide access to a service. In the blockchain stack, the protocol token is used to access the service provided by the network, such as file storage in the case of Storj. Historically, the only method of monetizing a protocol was to build software that implemented the new protocol and was superior to the competition. This was possible for research divisions in well-funded companies, but in academia, the pace of research was slower in the early days because the researchers creating those protocols had little opportunity for financial gain. With tokens, the creators of a protocol can monetize it directly through an ICO and benefit more as the token is widely adopted and others build services on top of the new protocol.

Due to proper incentives, a readily available data sharing layer, and application of tokens beyond the utility of a currency, developers are spending considerable time on the underlying protocols to crack difficult technical problems. As a result, startups building on the blockchain stack will inevitably spend more time on the “fat” protocol layer and solve technical challenges to capture value and differentiate themselves from a sea of Ethereum tokens.

Recently, with more tokens up and coming in the Ethereum ecosystem, cross-compatibility has become a concern. To address this issue, a new specification called ERC20 has been developed by Fabian Vogelsteller. ERC20 is a standard interface for tokens in the Ethereum network. It describes six standard functions that every access token should implement to be compatible with DApps across the network.

ERC20 allows for seamless interaction with other smart contracts and decentralized applications on the Ethereum blockchain. Tokens that only implement a few of the standard functions are considered partially ERC20-compliant. Even partially compliant tokens can easily interface with third parties, depending on which functions are missing.

■ Note Setting up a new token on the Ethereum blockchain requires a smart contract. The initial parameters and functions for the token are supplied to the smart contract that governs the execution of a token on the blockchain.


To be fully ERC20-compliant, a developer needs to incorporate a specific set of functions into their smart contract that will allow the following actions to be performed on the token:

• Get the total token supply: totalSupply()

• Get an account balance: balanceOf()

• Transfer the token: transfer(), transferFrom()

• Approve spending of the token: approve(), allowance()
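The six functions listed above can be illustrated with a small in-memory model. This is only a sketch in Python, not on-chain code: a real ERC20 token is a smart contract in which the caller is implicit, whereas here the caller is passed explicitly. The class name SimpleToken and the account names are hypothetical.

```python
from typing import Dict, Tuple


class SimpleToken:
    """In-memory model of the six ERC20 functions (illustrative only)."""

    def __init__(self, initial_supply: int, owner: str) -> None:
        self._total_supply = initial_supply
        self._balances: Dict[str, int] = {owner: initial_supply}
        # (owner, spender) -> amount the spender may still move
        self._allowances: Dict[Tuple[str, str], int] = {}

    def total_supply(self) -> int:
        return self._total_supply

    def balance_of(self, account: str) -> int:
        return self._balances.get(account, 0)

    def transfer(self, sender: str, to: str, amount: int) -> bool:
        # Reject negative amounts and transfers exceeding the balance.
        if amount < 0 or self.balance_of(sender) < amount:
            return False
        self._balances[sender] = self.balance_of(sender) - amount
        self._balances[to] = self.balance_of(to) + amount
        return True

    def approve(self, owner: str, spender: str, amount: int) -> bool:
        if amount < 0:
            return False
        self._allowances[(owner, spender)] = amount
        return True

    def allowance(self, owner: str, spender: str) -> int:
        return self._allowances.get((owner, spender), 0)

    def transfer_from(self, spender: str, owner: str, to: str, amount: int) -> bool:
        # The spender may only move what the owner has approved.
        if self.allowance(owner, spender) < amount:
            return False
        if not self.transfer(owner, to, amount):
            return False
        self._allowances[(owner, spender)] = self.allowance(owner, spender) - amount
        return True
```

For example, after alice approves dave for 100 units, dave can call transfer_from to move up to 100 of alice's tokens to a third account, and the remaining allowance shrinks accordingly.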

When a new token is created, it is often premined and then sold in a crowdsale, also known as an ICO. Here a premine refers to allocating a portion of the tokens for the token creators and any parties that will offer services for the network (e.g., running full nodes). Tokens have a fixed sale price. They can be issued and sold publicly during an ICO at the inception of a new protocol to fund its development, similar to the way startups have used Kickstarter to fund product development.

The next question we should ask regarding tokens is this: Given that tokens are digital, what do token buyers actually buy? Essentially, what a user buys is a private key. This is the analogy we made to paid API keys: Your private key is just a string of characters that grants you access. A private key can be understood to be similar to a password. Just as your password grants you access to the e-mail stored on a centralized database like Gmail, a private key grants you access to the digital tokens stored on a decentralized blockchain stack like Ethereum.

■ Tip The key difference between a private key and a password stored on a centralized database is that if you lose your private key, you will not be able to recover it. Recently, there have been some attempts to restore access to an account through a recovery service using a cosigner who can verify the identity of the user requesting recovery.

Ultimately, tokens are a better funding and monetization model for technology, not just startups. Currently, even though base cryptocurrencies such as Ethereum have a larger market share, tokens will eventually amount to 100 times the market share. Figure 7-1 provides a summary of the differences between value creation for traditional Internet companies and companies building on the blockchain stack using Ethereum tokens. Let’s begin with our first token, which will serve as a model for all the tokens that follow.

Michael Oved at Consensys suggested that tokens will be the killer app that everyone has been waiting for: If killer apps prove the core value of larger technologies, Ethereum tokens surely prove the core value of blockchain, made evident by the runaway success that tokens have brought to these new business models.

We are now seeing a wave of launches that break tokens through as the first killer app of blockchain technology. Bitcoin, in many ways, is a “proof of concept” for a blockchain-based asset. The Ethereum platform is proving that concept at scale.


Figure 7-1. An overview of a blockchain stack used by tokens

This model is based on Joyce J. Shen’s description of distributed ledger technology. The traditional Internet companies such as Google and Facebook created and captured value in the application layer by harvesting data that users generated. Blockchain companies have a different dynamic in terms of ownership and the technology available. The blockchain itself provides a mechanism for consensus and a shared data layer that is publicly accessible. Ethereum further provides a Turing-complete programming language and node-to-node compatibility through an EVM that can run the instructions contained in a smart contract.

Using smart contracts, a full application can be constructed to run on the blockchain and a collection of applications becomes a full decentralized service such as Storj.


Ethereum Computational Market

ECM is a very simplistic and functional token for off-chain computations and the first token we consider in this chapter. It serves as a general model covering all the necessary features that an HPC token would need. ECM is designed to facilitate execution of computations off-chain that would be too costly to perform within the EVM. In essence, ECM is a decentralized version of Amazon’s EC2. The key technical advance in ECM is the computation marketplace, which allows one user (the customer) to pay another (the host) for executing an algorithm off-chain and report the results back to the customer. Additionally, to preserve the integrity of the computation being done off-chain, each algorithm also has an on-chain implementation. This on-chain component can be used to verify whether the submitted end result is indeed correct. This on-chain implementation also plays a role in resolving disputes. Let’s take a look at the life cycle of a request through ECM in Figure 7-2. ECM uses some terminology specific to the project for describing the life cycle of a request when it’s initially received to the final resolution. We employ some of this terminology in Figure 7-2, along with a few additional terms:

• Pending: Indicates when a request is received. Every request begins in the pending status.

• Waiting for resolution: A request for computation has been submitted to the network, and a host has computed the algorithm and is now reporting the result. This is a decision point for the customer: Either the answer is accepted and the request moves to soft resolution status, or the answer is challenged, in which case the request moves to needs resolution status.

• Needs resolution: When an answer is challenged, this path is taken from the decision tree. The request is changed to needs resolution status and the on-chain verification component of the algorithm is required.

• Resolving: This is the interim period as on-chain computation is being performed for a request. The request will remain in this status until the computation has been completed.

• Firm vs. soft resolution: Once the on-chain computation has been completed, the request is set to the firm resolution status. If no challenges are made within a certain window of time after the answer has been submitted to the customer, the request is set to soft resolution.

• Finalized: Once an answer is obtained either through soft or hard resolution, the original request can be set to finalized status. This unlocks the payment from the customer’s end and allows the host to receive payment for off-chain computation.
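The statuses above form a small state machine, which can be sketched as follows. This is an illustrative Python model, not ECM's contract code: the enum names and the transition table are assumptions derived from the descriptions in the list, and the numeric status codes used on-chain are deliberately not modeled.

```python
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    WAITING_FOR_RESOLUTION = "waiting for resolution"
    NEEDS_RESOLUTION = "needs resolution"
    RESOLVING = "resolving"
    FIRM_RESOLUTION = "firm resolution"
    SOFT_RESOLUTION = "soft resolution"
    FINALIZED = "finalized"


# Allowed moves between statuses, as described in the list above.
TRANSITIONS = {
    Status.PENDING: {Status.WAITING_FOR_RESOLUTION},
    Status.WAITING_FOR_RESOLUTION: {Status.SOFT_RESOLUTION, Status.NEEDS_RESOLUTION},
    Status.NEEDS_RESOLUTION: {Status.RESOLVING},
    Status.RESOLVING: {Status.FIRM_RESOLUTION},
    Status.FIRM_RESOLUTION: {Status.FINALIZED},
    Status.SOFT_RESOLUTION: {Status.FINALIZED},
    Status.FINALIZED: set(),
}


def advance(current: Status, nxt: Status) -> Status:
    """Move a request to the next status, rejecting illegal jumps."""
    if nxt not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {nxt.value}")
    return nxt


def walk(path):
    """Follow a whole path of statuses starting from PENDING."""
    status = Status.PENDING
    for nxt in path:
        status = advance(status, nxt)
    return status
```

An unchallenged request walks pending, waiting for resolution, soft resolution, finalized; a challenged one detours through needs resolution, resolving, and firm resolution before it is finalized.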

■ Note The reader should understand that the Ethereum Computational Market is presented here as a generic model to highlight all the components of an HPC token. That’s why we go through the technical details of computing requests, on-chain and off-chain processing, market dynamics, and other concepts. In the later sections, all the generic components are replaced by well-thought-out mechanisms and features.


Figure 7-2. Life cycle of a request as it goes through ECM

Each request state just described is broken down further into the workflow here. Once a task request is processed off-chain, there is a decision point concerning the final answer. If the customer challenges the answer provided by the host, the on-chain component of the algorithm will execute and the request will go through a few states of needing resolution. On the other hand, if no challenges are made, the request moves to soft resolution and is considered finalized. The host receives payment after the request has reached the finalized state.


Now that we have an understanding of how a request gets processed in ECM, let’s talk about the marketplace and individual markets within that marketplace. The concept that we have referred to as an algorithm being computed off-chain is made from three separate contracts: a broker contract, a factory contract, and an execution contract.

• Broker: A contract that facilitates the transfer of a request from the customer to the host who will carry out the computation.

• Execution: A contract used for on-chain verification of a computation. This contract can carry out one cycle of on-chain execution in the event that the submitted answer is challenged.

• Factory: A contract that handles the deployment of the execution contract on-chain in the case of dispute resolution. This contract supplies relevant metadata such as the compiler version required to recompile the bytecode and verify it.

The marketplace itself has verticals (markets within a marketplace) that execute specialized types of contracts and algorithms, creating use-case-specific HPC economics on the Ethereum blockchain using tokens. Figure 7-3 provides a visual summary of the three contracts that are a component of each market.

What is required to make a computation request on ECM? There are primarily two functions that create a request and provide all the necessary details. Here, the requestExecution function is used to create a request for computation and this function takes two arguments. First is a set of inputs to the function itself and second is the time window during which a submitted answer can be challenged before the request turns to soft resolved status. This time window is given in number of blocks, because blocks are created at a roughly regular time interval in Ethereum. Finally, this function also specifies the payment being offered for this computation. The getRequest function returns all the metadata regarding the request. The following are some of the relevant parameters returned from the metadata:

• address requester: The address of the customer that requested the computation.

• bytes32 resultHash: The SHA3-hash of the result from a given computation.

• address executable: The address of the executable contract that was deployed on-chain to settle a challenged answer.

• uint status: The status of a request at a given time through the life cycle. It is an unsigned integer corresponding to the status of a request given by numbers 0 through 7.

• uint payment: The amount in wei that this request will pay (to the host) in exchange for completion of a computation. Wei is the smallest unit of Ether that can be transferred between users, similar to Satoshis in Bitcoin.

• uint softResolutionBlocks: A time window given by number of blocks within which a challenge to an answer must be submitted. If no challenges are submitted to an answer in that interval, the request changes to soft resolution.

• uint requiredDeposit: Amount in wei that must be provided when submitting an answer to a request, or by a challenger objecting to a submitted answer. This deposit is locked until the request moves to finalized state and then is released back to the host.
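The metadata fields above can be mirrored in a small record type, which also makes the block-based challenge window concrete. This is a Python sketch under stated assumptions: the class RequestMetadata, the helper challenge_window_open, and the choice to treat the window as closed once the last block has passed are all hypothetical; only the field meanings come from the list above.

```python
from dataclasses import dataclass

WEI_PER_ETHER = 10**18  # 1 Ether is 10^18 wei


@dataclass(frozen=True)
class RequestMetadata:
    requester: str               # address of the customer
    result_hash: bytes           # hash of the submitted result
    executable: str              # address of the deployed executable contract
    status: int                  # numeric status code (0 through 7)
    payment: int                 # reward for the host, in wei
    soft_resolution_blocks: int  # length of the challenge window, in blocks
    required_deposit: int        # deposit for answering or challenging, in wei


def challenge_window_open(request: RequestMetadata,
                          answered_at_block: int,
                          current_block: int) -> bool:
    """True while a challenge can still be submitted (boundary is an assumption)."""
    return current_block < answered_at_block + request.soft_resolution_blocks


def wei_to_ether(amount_wei: int) -> float:
    """Convert wei to Ether for display purposes only."""
    return amount_wei / WEI_PER_ETHER
```

With a window of 10 blocks and an answer recorded at block 100, a challenge at block 105 falls inside the window, while one at block 110 arrives after the request would have moved to soft resolution.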


It is important to note that in our discussion, a few tasks such as answer verification, conflict resolution, and challenges have required deposits. The deposits are used as a countermeasure to reduce the potential for Sybil attacks on the network. A Sybil attack is an attack in which a single adversary controls multiple nodes on a network and can thereby manipulate PoW on the network.

Figure 7-3. Overview of a market in the marketplace

The three components in a market necessary for responding to a request by a user (customer) are the broker contract, an execution contract, and a factory contract. The broker contract facilitates a customer–host interaction and the other two contracts play a role in dispute resolution. In the case of challenges to a submitted answer, the broker will initiate the deployment of the execution contract through its factory contract (also called FactorBase, shown with the red workflow), which consolidates the information and prepares for one-cycle execution of the algorithm. The gas necessary for execution will be taken from a deposit that the challenger is required to submit. We discuss the submission and challenge process next.

Next, we talk about submitting an answer back to the customer and the challenge process. Submitting an answer for a computation done off-chain is performed with the answerRequest function. This function takes a unique ID of the request being answered as an input. The actual submission of a potential answer requires a deposit in Ether. This deposit is locked until the request has reached either a soft or hard resolution state. We discuss the importance of holding deposits from involved parties shortly. Once a request has been finalized, the deposit made by the host who submitted an answer is reclaimed, along with the reward for performing the computation. If a submitted answer does not fall within the expectations of the request submitted by a customer, a participant can challenge the answer. This initiates an on-chain verification process that will execute one cycle of the computation within the EVM to verify whether the submitted answer is correct. If the submitted answer is found to be incorrect during on-chain computation, the gas costs of that computation are deducted from the host’s deposit. The challenger will get a large portion of the reward from the customer’s deposit, and the dispute will be resolved.

For a request where a submitted answer has been challenged, the request moves to the needs resolution state. This is accomplished by calling the initializeDispute function, which serves as a transition between the point at which a challenge is made and the beginning of on-chain verification.

Now the broker contract will use a factory to deploy an executable contract initialized with the inputs for this request. The gas costs for calling this function and performing one-cycle execution are reimbursed from the challenger’s deposit. Throughout the resolving state on a request, the executeExecutable function is called until the on-chain verification has been completed. At this point, the request is moved to a hard resolution state and eventually finalized. The gas charges and reward system might seem complicated, but it follows a simple principle: The correct answer receives payment for the computation, and incorrect submitters must pay for gas during on-chain verification. Let’s recap:

• In the case of soft resolution, a host reclaims the initial deposit and a reward given by the customer for executing the computation.

• If there were no correct submitted answers, the gas costs for verification are split evenly among the users who submitted answers. The reward payment returns to the customer who originally requested the computation.

• In the case of hard resolution, the incorrect host reclaims the remaining deposit after gas costs have been deducted (for on-chain verification). This host does not receive a reward for the computation.

• If a challenger wins hard resolution, they reclaim their deposit along with the reward payment. The gas costs are debited from the incorrect host’s deposit.
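The recap above amounts to a simple payout rule, sketched here in Python. This is not ECM's settlement code: the function settle, its parameters, and the participant names are hypothetical, amounts are plain integers standing in for wei, and any remainder from splitting gas costs evenly is ignored for simplicity.

```python
from typing import Dict, Optional


def settle(reward: int,
           deposits: Dict[str, int],
           winner: Optional[str],
           gas_cost: int = 0) -> Dict[str, int]:
    """Return what each submitter and the customer receive at finalization.

    deposits maps each party that submitted an answer (host or challenger)
    to its locked deposit; winner is the party whose answer was correct,
    or None if no submitted answer was correct.
    """
    if winner is not None and winner not in deposits:
        raise ValueError("winner must be one of the submitters")

    losers = [name for name in deposits if name != winner]
    # Incorrect submitters share the gas spent on on-chain verification.
    gas_share = gas_cost // len(losers) if losers else 0

    payouts = {"customer": 0}
    for name, deposit in deposits.items():
        if name == winner:
            payouts[name] = deposit + reward          # deposit back plus reward
        else:
            payouts[name] = max(deposit - gas_share, 0)  # deposit minus gas
    if winner is None:
        payouts["customer"] = reward                  # reward returns to customer
    return payouts
```

Soft resolution is the case with a single host as winner and no gas cost; a successful challenge is the case where the challenger is the winner and the host absorbs the verification gas.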

On-chain verification of a computation is by nature an expensive task due to gas costs. ECM is designed such that off-chain computation would be cheaper than running a task in EVM. From a technical standpoint, there are two types of on-chain execution implementations: stateless and stateful computations. Figure 7-4 shows a contrast between the two implementations.

Figure 7-4. Two models for factory execution contracts in ECM


An executable contract is stateless if the computation is self-sufficient in that it does not require external sources of data. Essentially, the return value from a previous step is used as the input for the next step.

Piper Merriam (the creator of ECM) proposed that stateless executable contracts are superior to stateful implementations for two main reasons: lower overhead while writing the algorithm and reduced complexity because the computation cycles are self-sustaining. An example highlighted by Piper is the Fibonacci sequence written as a stateless implementation with the algorithm returning the latest Fibonacci numbers as the input for the next cycle of execution. The overhead of writing this in a stateless form is very minor and there is no additional complexity of introducing local storage.

■ Tip For stateless contracts, the execution contract is identical to the algorithm sent as a request to the marketplace for computation. Here, on-chain verification would run the execution contract for one cycle and hosts would run it for as many cycles as necessary to obtain the final answer.

An executable contract is stateful if the computation is not self-sufficient in that it requires an additional data source and the return values from the previous step. This additional data source is often in the form of a data structure holding local storage. Let’s go back to our example of the Fibonacci sequence and make it stateful. To do this, the algorithm would store each computed number in the contract storage and only return the latest number to the algorithm for the next cycle of execution. Every step of execution would require the algorithm to search the last number stored to compute the next number. By including storage, now the algorithm will search through saved results and print out any desired Fibonacci sequence that has been computed. This reliance on local state is what makes this instance of the contract stateful. Stateful contracts also enable new and complex features such as using lookup tables and search, but also cause an increase in the complexity of the written algorithm.
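The contrast between the two forms can be made concrete with the Fibonacci example. The following Python sketch is illustrative only (the real executable contracts run in the EVM): fib_step, verify_cycle, and StatefulFib are hypothetical names. In the stateless form, the return value of one cycle is the complete input of the next; in the stateful form, each cycle reads from and appends to local storage.

```python
from typing import List, Tuple


def fib_step(state: Tuple[int, int]) -> Tuple[int, int]:
    """Stateless cycle: the pair (a, b) becomes (b, a + b)."""
    a, b = state
    return (b, a + b)


def run_stateless(cycles: int) -> int:
    """Off-chain host: repeat the stateless cycle and return F(cycles)."""
    state = (0, 1)
    for _ in range(cycles):
        state = fib_step(state)
    return state[0]


def verify_cycle(previous: Tuple[int, int], claimed: Tuple[int, int]) -> bool:
    """Verification of a single cycle: rerun one step and compare."""
    return fib_step(previous) == claimed


class StatefulFib:
    """Stateful form: every computed number is kept in local storage."""

    def __init__(self) -> None:
        self.storage: List[int] = [0, 1]

    def step(self) -> int:
        # Each cycle must look up the last stored numbers.
        nxt = self.storage[-2] + self.storage[-1]
        self.storage.append(nxt)
        return nxt

    def lookup(self, n: int) -> int:
        """Return a previously computed Fibonacci number from storage."""
        return self.storage[n]
```

The stateless version needs nothing beyond the previous return value, which is why a single cycle is cheap to recheck. The stateful version gains lookup at the cost of maintaining storage.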

During on-chain verification, a single cycle of the execution contract will be executed. However, a self-contained contract in stateless form will be executed efficiently and without any additional complexity.

In stateless contracts, each step executed inside an EVM is elementary so it falls within the gas limits of that virtual machine. On the other hand, some contracts require additional storage due to the complexity of execution. In such cases, a stateful contract is executed where local storage is bound to the EVM during the on-chain verification. This storage is temporary and only exists through the duration of the on-chain processing.


Golem Network

Golem (https://golem.network/) is a decentralized general-purpose supercomputing network. In addition to being a marketplace for renting computational resources, Golem aims to power microservices and allow asynchronous task execution. As a technology stack, Golem offers both Infrastructure-as-a-Service (IaaS) and Platform-as-a-Service (PaaS) through a decentralized blockchain stack for developers. In Golem, there are three components that function as the backbone of a decentralized market:

• Decentralized farm: A mechanism to send, organize, and execute computation tasks from individual users known as requesters and hosts known as providers (of computational resources). This provides the users competitive prices for tasks such as computer-generated imagery (CGI) rendering and machine learning on network access.

• Transaction framework: Golem has a custom payment framework for developers to monetize services and software developed to run on the decentralized network. This framework can be customized to capture value in new and innovative methods such as escrows, insurance, and audit proofs on the Ethereum blockchain.

• Application registry: Developers can create applications (for particular tasks) that take advantage of Golem as a distribution channel and a marketplace with new and unique monetization schemes. These applications can be published to the application registry, which essentially functions as an app store for the network.

Golem’s premise in building the marketplace is that not all members will request additional resources for extensive computation at all times. As such, the requesters can become providers and rent their own hardware to earn extra fees. Figure 7-5 provides an overview of how the three components in Golem work in sync. Currently, Golem inherits the integrity (Byzantine fault tolerance) and consensus mechanisms from Ethereum for the deployment, execution, and validation of tasks. However, eventually Golem will use fully functional micropayment channels for running microservices. This will allow users to run services like a note-taking app, website hosting, and even large-scale streaming applications in a completely decentralized manner. Several more optimizations are needed before Golem reaches a level of maturity, and currently the most pressing developments are concerning execution of tasks. Before Golem can execute general-purpose tasks, we need to ensure that the computation takes place in an isolated environment without privileges, similar to an EVM but expanded with more features. We also need whitelist and blacklist mechanisms along with digital signatures recognized in the application registry that allow providers to build a trust network and for users to run applications cryptographically signed by trusted developers. Additionally, a network-wide reputation system is necessary to reward the providers that have participated the most during computation tasks and also to detect a malicious node and mitigate tasks efficiently.

■ Note The first release, dubbed Brass Golem, will only allow one type of task to be executed on the network: CGI rendering. The main goals of this release are to validate the task registry and the basic task definition scheme. The developers want to integrate IPFS for decentralized storage, a basic reputation system for the providers involved, and Docker integration in the network.


Figure 7-5. The Golem technology stack

Three main components of the Golem stack are shown in Figure 7-5. Software developers create applications that automate routine tasks and publish them on the application registry. For instance, a time-limited rendering application can eliminate the need for any complicated transactions or task tracking. The buyer will simply make a single-use deposit and use the application until the time is up. When buyers use applications from the registry, the revenue generated is used to compensate the developers for their app. The registry contains two types of applications: verified apps from trusted developers and new apps from unconfirmed developers. Eventually verifiers pool in the newly uploaded applications and track them closely for any suspicious activity. After a few uses, the new applications also achieve verified status. The developers also receive minor compensation from the execution of these applications on the provider nodes. Providers requisition the hardware for apps and the lion’s share of revenue generated from running a task is given back to the providers (miners). The transaction mechanism assures the proper delivery of funds to the appropriate party and the buyer is charged for task execution by the same mechanism.

Application Registry

The application registry provides requesters with a massive repository to search for specific tools or applications fitting their needs. The registry is an Ethereum smart contract among three entities: the authors, validators, and providers. What’s the design rationale behind adding these three entities? For general-purpose computing, the code is isolated in a sandbox and then executed with the bare minimum privileges.

However, potential software bugs could wreak havoc in a provider’s sandbox: malicious code executing in a virtual machine could attempt to escalate privileges or even take over the machine. That’s why sandboxing alone is not enough for Golem. One could ask whether the code can be evaluated automatically for safety and security. In theory, this is not possible in general: because of the halting problem, we cannot determine the outcome of a complex algorithm before executing it.

■ Note The halting problem is the problem of determining whether a program will finish running or continue to run forever, given an arbitrary computer program and an input.

To enable secure and trusted code execution on host machines, the application registry is split among three parties that are responsible for maintaining integrity of the network. Authors publish decentralized applications to the registry, validators review and certify DApps as safe by creating a whitelist, and providers maintain blacklists of problematic DApps. Validators also maintain blacklists by marking applications as malicious or spam, and providers often end up using a blacklist from validators. Similarly, providers can preapprove certain Golem-specific applications (certified by validators) to execute on their nodes.

Providers can also elect to curate their own whitelists or blacklists. The default option for Golem is to run using a whitelist of trusted applications. The first set of apps will be verified by the developers to kickstart Golem; after the initial distribution, however, the network will rely on validators. On the other hand, providers can also take the approach of relying only on blacklists. This approach has the advantage of maximizing the reach of a provider (to the marketplace) and offering a wide range of applications that can be executed on a node. Eventually, providers will become specialized and fine-tune their nodes to be incredibly efficient at running one kind of task. This allows the providers more control over what runs on their nodes and custom hardware options for a computing farm. Ultimately, this option is available to developers who want to maximize their profits and are willing to run dedicated machines with bleeding-edge software.
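The two provider policies described above, whitelist by default or blacklist only, reduce to a short admission check. This Python sketch is a hypothetical illustration rather than Golem's registry logic: the function may_run and the application identifiers are invented for the example.

```python
from typing import AbstractSet, Optional


def may_run(app_id: str,
            whitelist: Optional[AbstractSet[str]] = None,
            blacklist: AbstractSet[str] = frozenset()) -> bool:
    """Decide whether a provider node accepts an application.

    With a whitelist, only listed apps run (the default, stricter policy).
    With whitelist=None, the provider relies on the blacklist alone and
    accepts everything that is not explicitly banned.
    """
    if app_id in blacklist:
        return False
    if whitelist is not None:
        return app_id in whitelist
    return True
```

A provider relying only on blacklists accepts a wider range of applications, including ones no validator has certified yet, which is the trade-off between reach and caution described above.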

In a decentralized network like Golem, we will see a divide between traditional and vanguard validators. Traditional validators will maintain a set of stable applications that perform very routine tasks.

For instance, a request for a complex 3D rendering can launch a preemptible rendering instance on a provider’s nodes. Once the job is completed, the instance will terminate to free up memory and send the output to the requester. On the other hand, vanguard validators will include and approve software that pushes the boundaries of what is possible with Golem. Running new and experimental software on a provider’s node will require better sandboxing and hardening of the virtual machine. But these features can be a premium add-on running on special nodes offered by a provider. Monetizing the premium add-ons will also disincentivize scammers and malicious entities. Overall, this design approach makes the network more inclusive, costly for scammers, and innovative for developers.

Transaction Framework

The transaction framework can be considered analogous to Stripe, an API for monetizing applications running on Golem. After the crowdsale, the network will use Golem Network Token (GNT) for all transactions between users, to compensate software developers and computation resource providers. The transaction framework is built on top of Ethereum, so it inherits the underlying payment architecture and extends it to implement advanced payment schemes such as nanopayment mechanisms and transaction batching. Both innovations mentioned here are unique to Golem, so let’s talk about why they are necessary to the network.

To power microservices, Golem will have to process a very high volume of small transactions. The value of a single payment is very low and these payments are also known as nanopayments. However, there is one caveat when using nanopayments: The transaction fees cannot be larger than the nanopayment itself. To solve this problem, Golem uses transaction batching. This solution aggregates nanopayments and sends them at once as a single Ethereum transaction to reduce the applied transaction fees. For instance, Golem developers note that the cost of ten payments processed in a single transaction is approximately half the cost of ten payments processed in ten transactions. By batching multiple transactions, a significantly lower transaction fee will be passed on to a user paying for per-unit usage (per-node or per-hour) of a microservice.
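The saving from batching can be illustrated with simple arithmetic. The sketch below assumes a cost model in which every Ethereum transaction pays a fixed overhead plus a cost per payment it carries. The fixed overhead of 21,000 gas is the base cost of an Ethereum transaction; the per-payment figure of 17,000 gas is a hypothetical value chosen for illustration so that the result lands near the ratio quoted above, not a measurement from Golem.

```python
BASE_TX_GAS = 21_000      # fixed overhead paid once per Ethereum transaction
PER_PAYMENT_GAS = 17_000  # hypothetical marginal cost of one payment


def gas_individual(payments: int) -> int:
    """Each payment travels in its own transaction and pays the overhead."""
    return payments * (BASE_TX_GAS + PER_PAYMENT_GAS)


def gas_batched(payments: int) -> int:
    """All payments share one transaction and pay the overhead once."""
    return BASE_TX_GAS + payments * PER_PAYMENT_GAS


def batching_ratio(payments: int) -> float:
    """Cost of the batch relative to sending the payments one by one."""
    return gas_batched(payments) / gas_individual(payments)
```

Under these assumed numbers, ten individual payments cost 380,000 gas and one batch of ten costs 191,000 gas, roughly half. The ratio keeps shrinking toward the per-payment share as the batch grows, because the fixed overhead is amortized over more payments.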


■ Note For providers, another model to power microservices is credit-based payment for per-unit hosting. Here, the requester makes a deposit of timelocked GNT and at the end of the day, the provider automatically deducts the charges for usage. The remaining credits are released back to the requester.

In Golem, nanopayments work in the context of one-to-many micropayments from a requester to many providers that assist in completing the computational tasks. The payments carried out for microservices are on the scale of $0.01 and for such small sums, the transaction fees are relatively large even on Ethereum.

The idea here is that instead of making individual transactions of $0.01, the requester (payer) issues a lottery ticket for a lottery for a $1 prize with 1/100 chance of winning. The value of such a ticket is $0.01 for the requester and the advantage is that on average only one ticket in 100 will lead to an actual transaction. This is a probabilistic mechanism to allow nanotransactions, but it does not guarantee that one given Golem node will be compensated adequately if the number of tasks computed is small. Bylica et al. provided the mathematical background to assuring fair distribution of income from this lottery system as the network expands, adding more requesters and providers. Essentially, as the number of tasks by requesters increases, the income a node generates from probabilistic lottery rewards will approach the amount it would receive if being paid with individual transactions.
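The expected value argument can be checked numerically. The following Python sketch models the simplest version of the idea, where every ticket is an independent draw; it is an illustration of the probabilistic principle, not Golem's actual protocol, and the function names and the seeded random generator are assumptions made for reproducibility.

```python
import random
from fractions import Fraction


def ticket_value(prize: Fraction, win_probability: Fraction) -> Fraction:
    """Expected value of one lottery ticket."""
    return prize * win_probability


def average_income_per_task(tasks: int,
                            prize_cents: int,
                            win_probability: float,
                            seed: int) -> float:
    """Simulate independent tickets and return the mean payout per task in cents."""
    rng = random.Random(seed)
    wins = sum(1 for _ in range(tasks) if rng.random() < win_probability)
    return wins * prize_cents / tasks
```

A ticket for a $1 prize with a 1/100 chance is worth exactly $0.01 in expectation. With only a handful of tasks a node will usually receive nothing, but as the number of tasks grows, the simulated average income per task settles close to one cent, matching what individual payments would have delivered.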

The lottery system to be implemented in Golem is much more predictable for the providers than a completely probabilistic scheme. The provider is assured that among the tickets issued to reward the providers of a single task, there are no hash collisions and exactly one ticket wins. Moreover, if there are 100 providers participating in the lottery payment, then the nanopayment protocol guarantees that the task would only have to be paid out once. Bylica and collaborators discussed a few counterclaim mechanisms that prevent malicious entities from claiming to be lottery winners and cashing out.

A brief sketch of the lottery process follows. After a task has been completed, the payer initiates a new lottery to pay out the participating providers. The payer creates a lottery description L that contains a unique lottery identifier and calculates its hash h(L). The hash is written to the Ethereum contract storage. The payer also announces the lottery description L to the Golem network, so that every participating node can verify that the payment has the correct value and check that h(L) is indeed written to the Ethereum storage. The winner of a lottery payout can be uniquely determined by cross-referencing a given description L and a random value R that is unknown to all parties except the lottery smart contract. After a certain amount of time, if the reward has not been claimed, the smart contract computes the winning provider’s address (given L and R) and transfers the reward from the contract’s associated storage to the winner. The hash of the lottery h(L) is also removed from the contract storage, and a new lottery payout cycle can begin. The nanopayment-based lottery payment system is illustrated in Figure 7-6.
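The lottery steps can be sketched off-chain in a few lines. This is a minimal illustration under several assumptions that do not come from the Golem protocol: SHA-256 stands in for the contract's hash function, the lottery description is a JSON-encoded dictionary, a Python set stands in for contract storage, and the winner is chosen by mapping a hash of L and R onto ranges proportional to each provider's payment. All names are hypothetical.

```python
import hashlib
import json


def lottery_hash(description):
    """h(L): hash of a canonical encoding of the lottery description."""
    encoded = json.dumps(description, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def pick_winner(payments, point):
    """Map a point in [0, total) to the provider whose value range contains it."""
    upper = 0
    for provider, value in payments:
        upper += value
        if point < upper:
            return provider
    raise ValueError("point must be smaller than the total lottery value")


def winner(description, random_value):
    """Derive the single winner from the description L and the random value R."""
    payments = description["payments"]
    total = sum(value for _, value in payments)
    seed = (lottery_hash(description) + random_value).encode()
    point = int.from_bytes(hashlib.sha256(seed).digest(), "big") % total
    return pick_winner(payments, point)


L = {
    "id": "lottery-001",  # unique lottery identifier
    "payments": [["provider_a", 1], ["provider_b", 2], ["provider_c", 7]],
}
contract_storage = {lottery_hash(L)}          # the payer writes h(L) to storage
assert lottery_hash(L) in contract_storage    # any node can re-check the announced L
print(winner(L, random_value="R-known-only-at-payout"))
contract_storage.discard(lottery_hash(L))     # h(L) is removed after the payout
```

Because the ranges do not overlap and together cover the whole prize, exactly one provider wins each lottery, and a provider owed a larger share wins proportionally more often.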


Figure 7-6. Nanopayment-based lottery payment system


On a macroscale, Andrzej Regulski wrote a post describing some of the economic principles behind GNT and long-term appreciation in value of the token enabled by this transaction framework. The most pertinent items from his post are quoted here:

GNT will be necessary to interact with the Golem network. At first, its sole role is to enable the transfer of value from requestors to providers, and to software developers. Later on, the Transaction Framework will make it possible to assign additional attributes to the token, so that, for example, it is required to store deposits in GNT.

The number of GNT (i.e., the supply) is going to be indefinitely fixed at the level created during the Golem crowdfunding. No GNT is going to be created afterwards.

A depreciation or appreciation of the token is neutral to the operations of the network, because users are free to set any ask/bid prices for compute resources in Golem, thus accommodating any fluctuations in GNT value.

The constant amount of tokens will have to accommodate a growing number of transactions, hence increasing the demand for GNT. With a reasonable assumption that the velocity of the token (i.e., the number of transactions per unit of the token in a specific period) is constant over time, this conclusion can be drawn from the quantity theory of money. This, in turn, means that the overall success and growth of the Golem network implies a long-run appreciation of the GNT.
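The quantity theory of money referenced in the quote is usually written as M × V = P × Q, where M is the money supply, V the velocity, P the price level, and Q the volume of transactions. The sketch below applies that identity with made-up numbers to show the direction of the effect. The function name and all figures are illustrative assumptions, not Golem data.

```python
def price_level(token_supply, velocity, transaction_volume):
    """Solve M * V = P * Q for P: tokens paid per unit of compute."""
    return token_supply * velocity / transaction_volume


# Fixed supply and constant velocity, with transaction volume doubling.
before = price_level(token_supply=1000, velocity=4, transaction_volume=2000)
after = price_level(token_supply=1000, velocity=4, transaction_volume=4000)
print(before, after)  # tokens needed per unit of compute, before and after growth
```

With supply and velocity held constant, doubling the transaction volume halves the number of tokens exchanged per unit of compute, which is another way of saying that each token buys twice as much.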

This payment framework can also be used as a fallback mechanism for conflict resolution. If a task challenge remains open after going through the traditional Golem mechanics, we need a method for hard resolution (as we saw in the ECM section). Here, we can use a TrueBit-style “trial” for final resolution.

TrueBit is a smart-contract-based dispute resolution layer built for Ethereum. It integrates as an add-on on top of the existing architecture for Ethereum projects such as Golem. The design principle for TrueBit is to rely on the only trusted resource in the network to resolve a dispute: the miners. A hard resolution using TrueBit relies on a verification subroutine involving limited-resource verifiers known as judges.

In TrueBit’s verification game, there are three main players: a solver, a challenger, and judges. The solver presents a solution for a task, the challenger contests the presented answer, and the judge decides whether the challenger or the solver is correct. The purpose of the verification subroutine is to reduce the complexity of on-chain computation. The game proceeds in rounds, where each round narrows down the scope of computation until only a trivial step remains. This last step is executed on-chain by a smart contract (the judge) that issues the final verdict on which party is correct. Note that the judges in this scheme are constrained in terms of computational power. Therefore, only very simple computations are ultimately carried out on-chain for the judge to make a ruling. At the end of this game, if the solver was in fact cheating, it will be discovered and punished. If not, the challenger will pay for the resources consumed by the false alarm. A visual guide to the verification game is provided in Figure 7-7.
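The round-by-round narrowing can be sketched as a binary search over the intermediate states of a computation. The code below is a simplified illustration, not TrueBit's implementation: it assumes both parties hold full execution traces in memory (a real system would exchange compact commitments such as Merkle roots), and the toy `step` function and all other names are hypothetical.

```python
def step(state):
    """One step of a toy deterministic computation (stand-in for a real program)."""
    return state * 3 + 1


def run_trace(initial, n_steps, corrupt_at=None):
    """Return every intermediate state; optionally inject an error at one step."""
    trace = [initial]
    for i in range(n_steps):
        nxt = step(trace[-1])
        if i == corrupt_at:
            nxt += 1  # a single wrong step, as a cheating or faulty party would produce
        trace.append(nxt)
    return trace


def find_disputed_step(solver_trace, challenger_trace):
    """Binary search for a step the parties agree on entering but not on leaving."""
    lo, hi = 0, len(solver_trace) - 1  # agree on the input, disagree on the output
    rounds = 0
    while hi - lo > 1:
        mid = (lo + hi) // 2
        rounds += 1
        if solver_trace[mid] == challenger_trace[mid]:
            lo = mid
        else:
            hi = mid
    return lo, rounds


def judge(solver_trace, disputed_step):
    """Re-execute only the disputed step and name the party that was wrong."""
    expected = step(solver_trace[disputed_step])
    return "solver" if solver_trace[disputed_step + 1] != expected else "challenger"


honest = run_trace(initial=7, n_steps=64)
cheating = run_trace(initial=7, n_steps=64, corrupt_at=37)
disputed, rounds = find_disputed_step(cheating, honest)
print(disputed, rounds, judge(cheating, disputed))
```

For a 64-step computation the dispute is narrowed to a single step in six rounds, and the judge re-executes only that one step. This is why the on-chain cost stays small even when the off-chain computation is long.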

■ Note The verification game works iteratively, with a reward structure benefiting the verifiers (challengers) who diligently search for errors. Accurate detection and eventual verification of errors results in challengers being rewarded with a substantial jackpot.


Figure 7-7. Verification game

To begin the trial, a challenger requests verification of a solution. The solvers get to work and provide their solutions. Verifiers get paid to check whether the solvers are providing accurate solutions for off-chain processing. If there is still a dispute, judges will perform on-chain processing looking for the first disagreement with the proposed solution. In the end, either the cheating solver is penalized, or the challenger will pay for the resources consumed by the false alarm.

TrueBit is an advanced on-chain execution mechanism compared to the on-chain verification implemented in ECM. It allows computations to be verified at a lower cost than running the full set of instructions because only one step is executed on-chain. Using TrueBit as a dispute resolution layer for off-chain computations, smart contracts can enable third-party programs to execute well-documented routines in a trustless manner. Even for advanced machine learning applications such as deep learning that require terabytes of data, as long as the root hash of the training data is encoded in the smart contract, it can be used to verify the integrity of off-chain computations. TrueBit works for large data sets because Merkle roots map the state of the network at a given time t and because a challenger can perform binary search. For a project like Golem aiming to achieve HPC toward a variety of use cases, TrueBit can enable large data sets to be used off-chain with an on-chain guarantee of accurate outputs.
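The role of the root hash can be illustrated with a small Merkle tree. The sketch below is a generic construction, not TrueBit's code: it assumes SHA-256 as the hash function and duplicates the last node on odd-sized levels, and all function names are illustrative. Only the root would need to be stored in a smart contract, while any individual chunk of the data set can be checked against it with a short proof.

```python
import hashlib


def h(data):
    """Hash helper (SHA-256 is an assumption made for this sketch)."""
    return hashlib.sha256(data).digest()


def merkle_levels(chunks):
    """Build every level of the tree, from leaf hashes up to the root."""
    level = [h(chunk) for chunk in chunks]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # duplicate the last node on odd levels
            levels[-1] = level
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def merkle_proof(levels, index):
    """Collect the sibling hashes needed to recompute the root from one leaf."""
    proof = []
    for level in levels[:-1]:
        proof.append((level[index ^ 1], index % 2 == 0))  # (sibling, node_is_left)
        index //= 2
    return proof


def verify(chunk, proof, root):
    """Recompute the root from a chunk and its proof, then compare."""
    node = h(chunk)
    for sibling, node_is_left in proof:
        node = h(node + sibling) if node_is_left else h(sibling + node)
    return node == root


chunks = [f"training-batch-{i}".encode() for i in range(5)]
levels = merkle_levels(chunks)
root = levels[-1][0]                       # the only value that must live on-chain
proof = merkle_proof(levels, 4)
print(verify(chunks[4], proof, root))      # genuine chunk
print(verify(b"tampered", proof, root))    # altered chunk
```

The proof length grows with the logarithm of the number of chunks, so checking one disputed piece of a very large data set requires only a handful of hashes.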

Supercomputing Organized by Network Mining

SONM is a decentralized implementation of the fog computing concept using a blockchain. To understand how SONM works within the framework of fog computing, we first need to define two networking concepts: IoT and IoE. The European Commission defines the IoT architecture as a pervasive network of objects that have IP addresses and can transfer data over the network. IoT lends itself to the broader concept of Internet of Everything (IoE), which provides a seamless communication bus and contextual services between objects in the real world and the virtual world. IoE is defined by Cisco as:

The networked connection of people, process, data, and things. The benefit of IoE is derived from the compound impact of connecting people, process, data, and things, and the value this increased connectedness creates as “everything” comes online.

Whereas IoT traditionally refers to devices connected to each other or to cloud services, IoE is an umbrella term that places a heavier focus on people as an essential component attached to the business logic for generating revenue. From an engineering standpoint, IoT is the messaging layer focusing on device-to-device communication and IoE is the monetization layer allowing startups to capitalize on the interaction between people and their devices. A staggering amount of data is generated from devices connected in an IoT network. Transferring these data to the cloud for processing requires enormous network bandwidth, and there is a delay, ranging from hours to days, between when the data are generated and when the processed results are received. This transfer delay is a major limitation of IoT technology. Recently, a growing concern has been the loss of value of actionable data during the transfer and processing stages: by the time the processed results arrive, it may be too late to act on them.

One solution presented by Ginny Nichols from Cisco is called fog computing. Fog computing reduces the transfer delay by shifting the processing stage to lower levels of the network. Instead of processing a task by offloading it to a centralized cloud, fog computing pushes high-priority tasks to be processed by a node closest to the device actually generating the data. For a device to participate in the fog, it must be capable of processing tasks, have local storage, and have some network connectivity. The name is a metaphor: fog forms close to the ground, and fog computing likewise extends the cloud closer to the devices (the ground) that produce IoT data to enable faster action. In fog computing, data processing is said to be concentrated at the edge (closer to the devices generating data) rather than existing centrally in the cloud. This minimizes latency and enables a faster response to time-sensitive tasks by reducing the time within which an action can be taken on data. SONM makes this layer of fog computing available to a decentralized network of participants for processing computationally intensive tasks.

■ Note The need for faster response time to data (or business analytics) collected by large enterprises stimulated the development of real-time computational processing tools such as Apache Spark, Storm, and Hadoop. These tools allow for preprocessing of data as they are being collected and transferred. In a similar manner, fog computing seems to be an extension of IoT developed in response to the need for rapid response.


SONM is built on top of Yandex.Cocaine (Configurable Omnipotent Custom Applications Integrated Network Engine). Cocaine is an open-source PaaS stack for creating custom cloud hosting engines for applications similar to Heroku. SONM has a complex architecture designed to resemble the world computer model of Ethereum. The SONM team designed this world computer with components that are parallel to a traditional personal computer, with one major difference: The components are connected to a decentralized network.

• CPU/processor (load balancer): In SONM’s architecture, the processor (hub) serves as a load balancer and task scheduler. The entire network can be represented as a chain of hub nodes (or hubs) that distribute tasks, gather results from a computing fog, pay miners for services, and provide status updates on the overall health of the network. Each hub node is analogous to a processor’s core, and the number of cores (or hub nodes) can be extended or reduced on the network as necessary. In a personal computer, the cores are locally accessible to the processor; in SONM, however, the cores are decentralized by nature. The hubs provide support to the whole network for coordinating the execution of tasks. More specifically, hubs provide the ability to process and parallelize high-load computations on the fog computing cloud.

• BIOS (blockchain): For SONM, the BIOS is the Ethereum blockchain. Ethereum offers a reliable backbone for network consensus and payment mechanisms that SONM can inherit. However, the base Ethereum implementation lacks a load balancer, and high gas costs have inspired alternatives built on top of the blockchain, such as SONM.

• Graphics processing unit (GPU): The GPU for SONM is the fog computing cloud. More specifically, this fog is composed of the miners in the SONM network that are making computational resources available to buyers.

• Connected peripherals: The buyers in SONM are equivalent to peripheral devices. They connect to the network and pay for any resources they use. The requests are broadcast on the blockchain and miners select what they want to execute on their machines. Then, the load balancer dictates the execution of tasks.

• Hard disk: Storage in SONM will be implemented using well-established decentralized storage solutions such as IPFS or Storj.

• Serial bus: This communication module is used for message passing and data transfer within the network between the sender (a node) and working machines. This serial bus is based on Ethereum Whisper, and enables broadcast and listening functions for messages on the network.